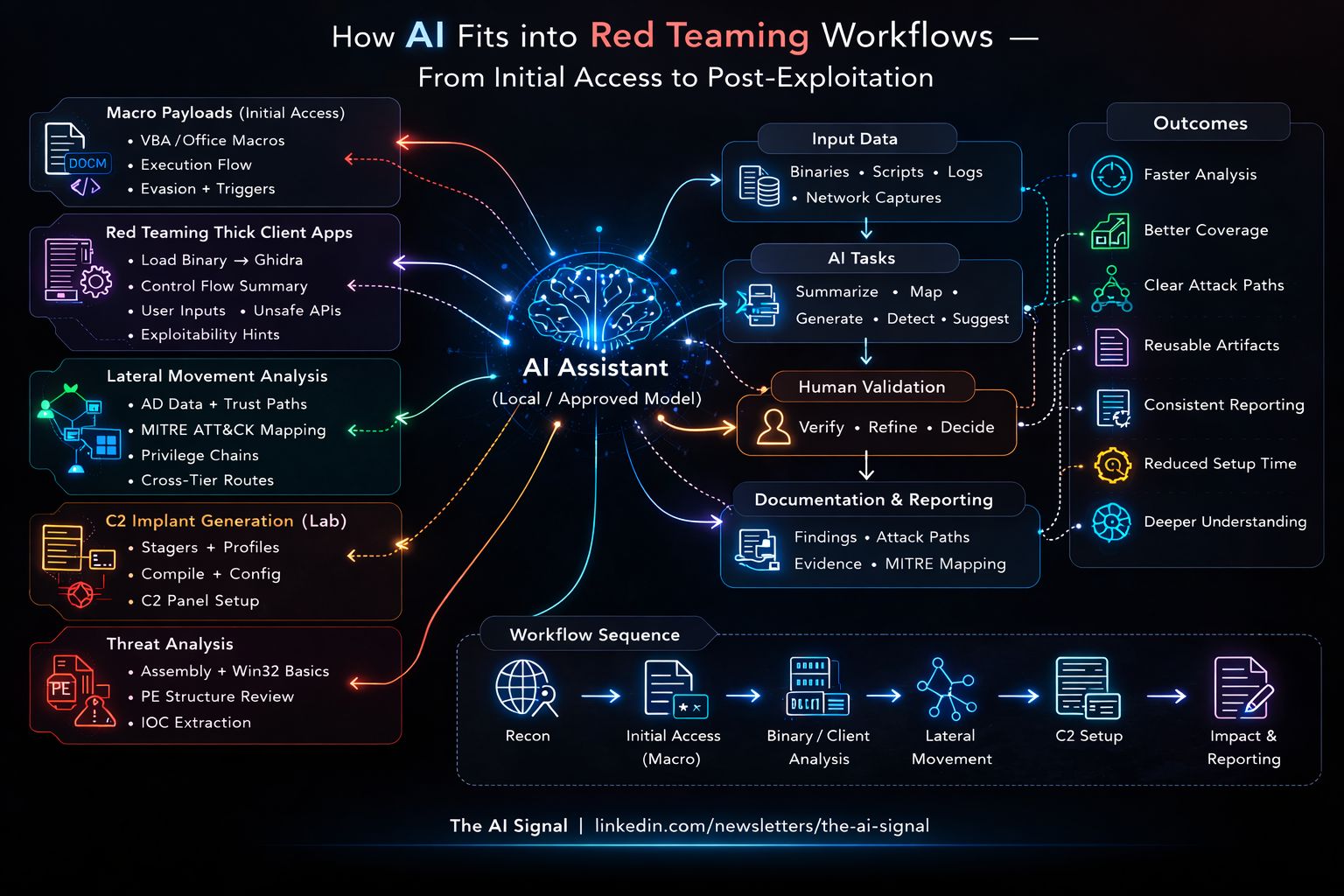

Where AI Fits in Red Teaming Workflows

A recent discussion with a few red team leads kept coming back to one question: where does AI actually fit in red teaming workflows? Not as a replacement for tradecraft, but as a tool that speeds up the right parts of the job. The answer is not “everywhere,” and it is not “nowhere.” It is in specific, repeatable tasks where you still own the reasoning and the outcome. Below are the areas we found most practical, plus how to use AI without giving up control or client trust.

Macro Payloads and Initial Attack Chains

Crafting macro payloads for documents as part of an initial attack chain is one practical area. Instead of manually building VBA structures, adjusting execution flow, or refining trigger logic, AI can help generate clean macro templates quickly. You still control the logic, execution path, and safety boundaries. It accelerates development, but does not replace understanding of how Office, process spawning, and detection controls work.

Thick Clients and Binary Triage

Red teaming thick client applications is another strong area. Binary triage is as well. When you load a binary into Ghidra, the first level of understanding takes time. If you extract specific functions and ask a model to summarize control flow, trace user-controlled inputs, or point out unsafe memory handling, it can speed up the early analysis. You still verify everything yourself. You still confirm exploitability. But you understand the code faster.

Lateral Movement Analysis

Lateral movement analysis in a Windows domain becomes complex when the environment is large. Local admin memberships, delegation settings, active sessions, trust relationships. It is not easy to mentally connect all of this. You can feed structured data into a model and take MITRE ATT&CK as a reference to construct possible movement paths across techniques and trust boundaries. It can highlight privilege chains and cross-tier access routes. You then validate what is actually possible and in scope.

C2 and Lab Setup

Making simple C2 implants in lab environments is another example. Instead of manually wiring Sliver profiles, writing custom stagers, tweaking compile flags, and setting up a quick control panel, AI can help generate a basic structure faster. It reduces setup effort. GhostLink is a C&C platform for red team engagements in this space: AI can help with boilerplate, config generation, and wiring up observers or control panels so you spend more time on operator workflow and OPSEC and less on repetitive setup. But OPSEC awareness, detection impact, and responsible usage remain with the operator.

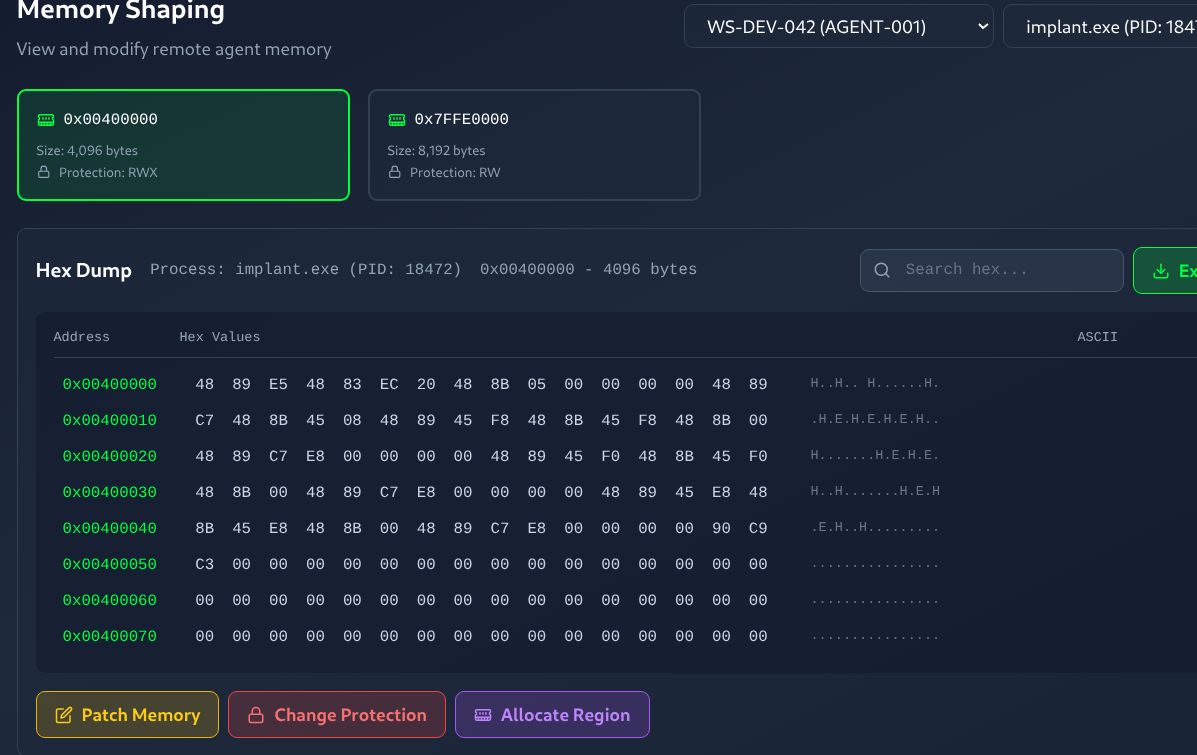

Editing remote process file on the host where the C2 agent runs.

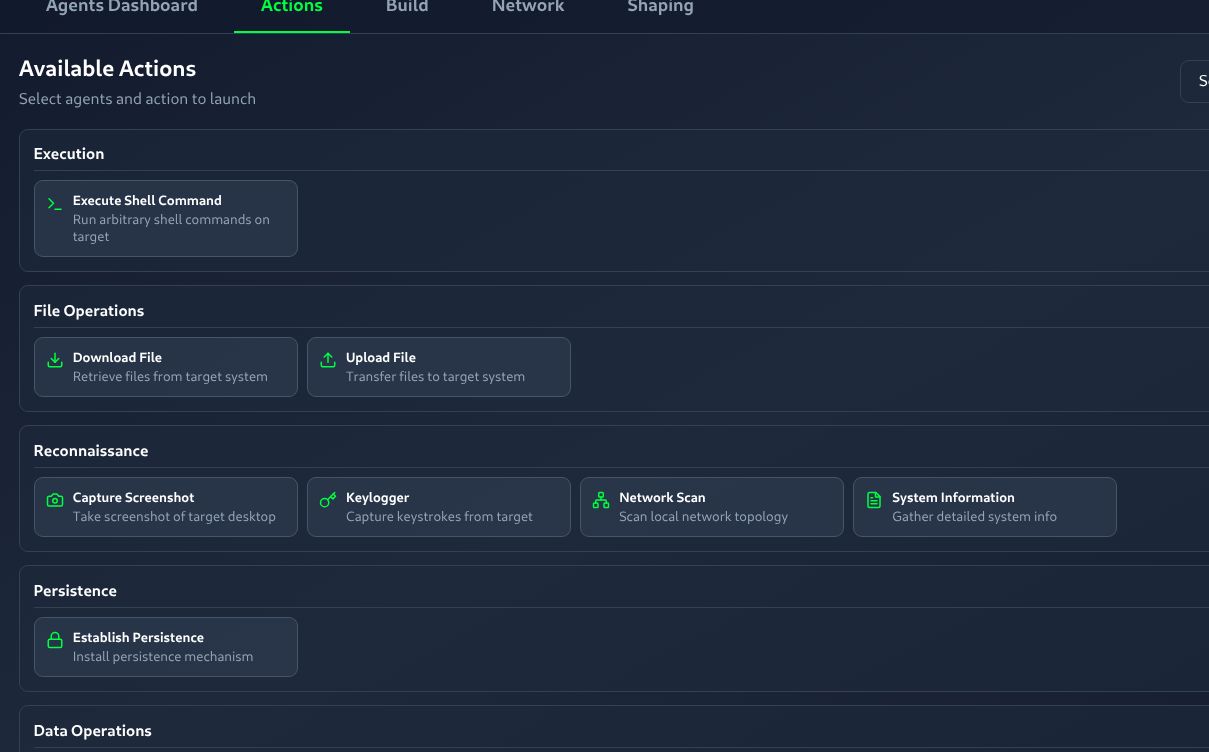

Launching actions from the control panel: download file, upload file, take screenshot on the machine where the C2 agent runs.

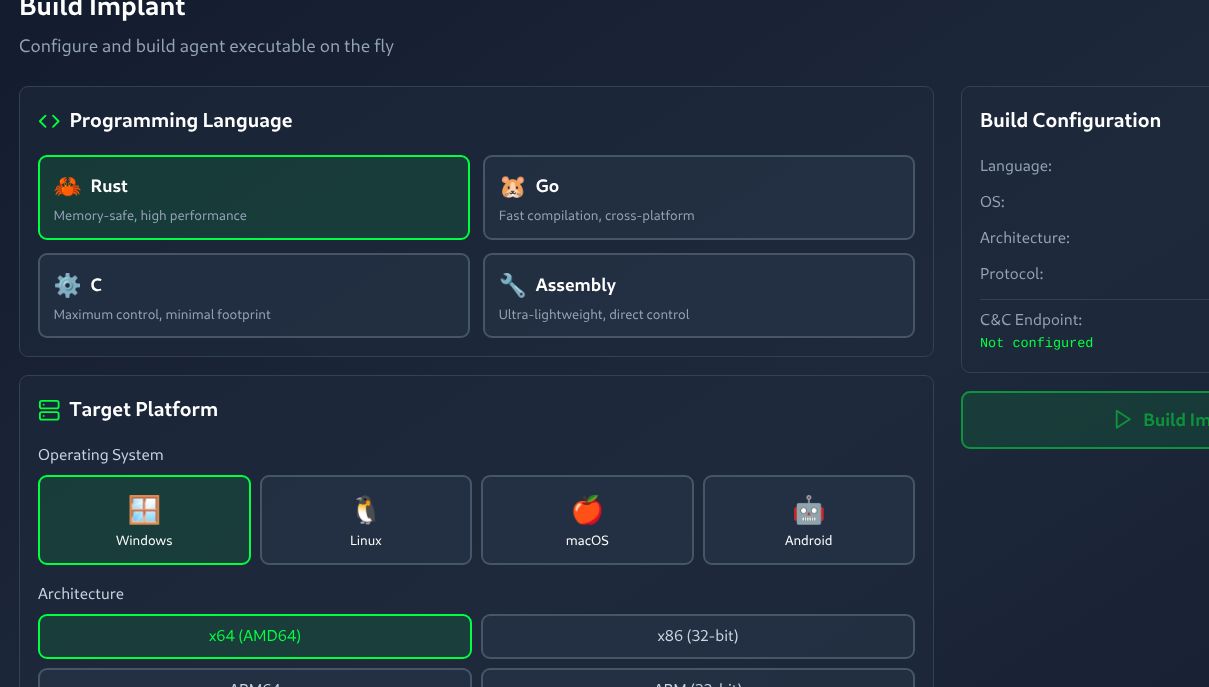

Compiling and building agents or implants for different architectures and OS platforms.

Observability and debugging for the C2 platform.

Caution and Guardrails

One caution. This is not about blindly copy-pasting into ChatGPT or casually using AI assistants. You must understand what you are doing. In red teaming, data sensitivity and client trust matter. I clearly advise a few conditions. Prefer local models wherever possible. Use synthetic or sanitized data for experiments. Put proper guardrails in place. Build a small observability layer to log prompts and outputs. And if the customer already has approved AI models in their own cloud environment, it is better to use their endpoints within their boundary instead of moving data outside.

The Real Advantage

AI does not replace red team skill. It reduces time spent on repetitive work and early analysis. The real advantage still belongs to people who understand authentication flows, privilege boundaries, operating system behavior, and trust relationships.

If you know what you are doing, AI makes you faster. If you do not, it only makes you confident without depth.